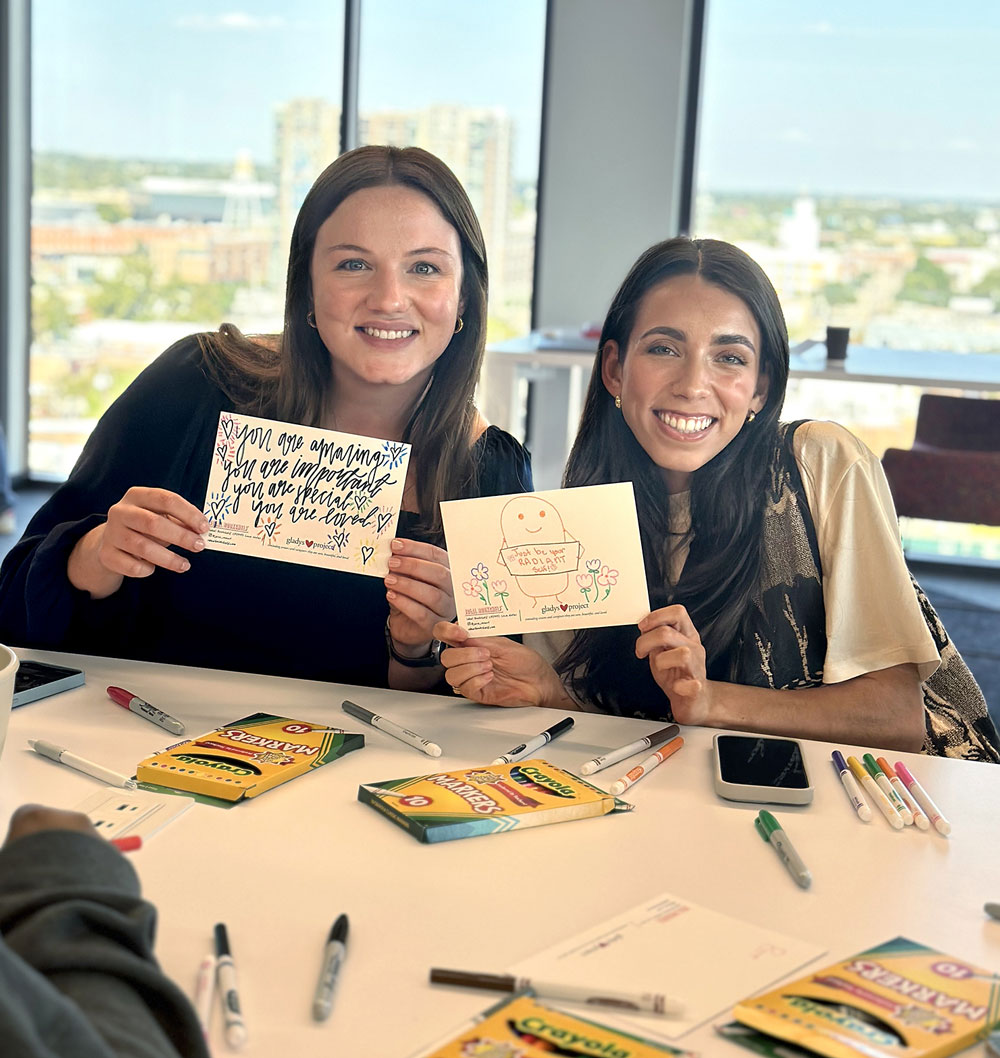

Fiercely independent since our founding, we remade ourselves in 2021 as a nonprofit-owned and people-run enterprise. The first part means just that: our agency is owned – though not run – by a nonprofit organization. This arrangement ensures that we can never be sold and will never be distantly managed. People-run? That means we encourage new voices, foster diverse thinking, and promote our best ideas regardless of origin. We embrace inclusion in our hallways, in our hiring, and especially in our thinking.

We love to create.

We live to create.
